The Moment the Mind Became Measurable

How Claude Shannon’s work led to the information age and gave cognitive science a mathematical foundation.

Probably the most basic idea in science is measurement. You cannot understand or manipulate something reliably without data. Things like mass and volume are easy to measure. But what about information? Before the 1940s, nobody knew how to measure it rigorously, which meant it remained difficult to study scientifically.

Meet Claude Shannon

Let’s get this out of the way… Anthropic’s chatbot, Claude, is reportedly named after Claude Shannon. That should give you a sense of his influence. His 1948 paper, A Mathematical Theory of Communication, republished as a book the following year, is one of the most cited scientific papers of all time. It has been called “the Magna Carta of the information age.”

Shannon’s work is the very beginning of a thread that connects many of the ideas we’ll soon discuss.

The question I consistently ask is especially relevant here: how do we know? What makes the science of explanation scientific? Claude Shannon is a big part of that answer.

Messaging in the 1940s

Shannon was a mathematician concerned with messages traveling long distances, like a radio transmission from New York to London. The messages were often garbled by interference or failed to arrive at all. Shannon wanted to solve these problems, but there was no way to measure how much of a message actually got through.

Over time, he developed ideas that helped solve the messaging problem and, more importantly, established the basis for information technology.

He was the first to describe, in scientific terms:

Capacity - How much information a channel can carry

Error correction - How information can survive interference

Compression - How to transmit information efficiently

This seems like a feat of engineering, and it is. But if you look deeper, you can see big ideas that are the roots of computer science, cognitive science, and more.

The Big Idea

Information, to me, seems like something we say or learn; a human phenomenon. For Shannon’s work, please avoid thinking in human terms. Our focus here is NOT on meaning or content. It’s on a way to measure any kind of information in any context. Think of it like measuring the volume of a liquid: it doesn’t matter which liquid.

To start, Shannon needed to define information. What is it? This is a deeper question than it appears because information is not a concrete thing.

To him, information exists when there is uncertainty that needs to be resolved. Imagine a radio operator waiting on a signal in order to make a decision. Information is what resolves the uncertainty.

Before the signal arrives: uncertain

After the signal arrives: resolved

What caused the change? Information

Shannon saw that some signals carry more information than others. He wanted to quantify these differences.

How to Measure Information

When a signal is transmitted, the amount of information it carries is based on how rare or surprising it is. Here’s an example:

Imagine two weather forecasts. One says that it will rain in Seattle in November. That’s an expected signal that resolves almost nothing for you. The signal carries little information.

The second weather forecast says it will snow in Miami in July. This is a complete surprise. The signal carries a lot of information because it was unexpected.

When a signal is already expected (rain in Seattle), it carries less information because its probability is high. When it’s a surprise (snow in Miami), it carries more information because its probability is low. Surprising signals = more information.

This is the starting point: using probabilities to gauge the amount of information in a signal. If you know the probability, you can calculate and measure information.

A Formula and Bits

The genius of Shannon’s work lies in a calculation that measures how much information any signal carries, based on its probability. He called the unit a “bit.” When a signal has more bits, it carries more information. Count how many bits a channel can carry in a given time and you have a measure of its capacity, for example.

The formula he used is: Information = log₂(1/probability).

The key point is that it works. It reliably calculates information in bits, which makes information measurable. Everything else follows from this. Once information became measurable, the mind itself became scientifically measurable in a new way.
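To see the formula in action, here is a minimal Python sketch. The function name information_bits and the probabilities for the two forecasts are my own illustrative assumptions, not real weather statistics. Only the formula itself comes from Shannon.

```python
import math


def information_bits(probability: float) -> float:
    """Bits carried by a signal that occurs with the given probability.

    Implements Information = log2(1 / probability).
    """
    if not 0.0 < probability <= 1.0:
        raise ValueError("probability must be greater than 0 and at most 1")
    return math.log2(1.0 / probability)


# Assumed probabilities, chosen only to illustrate "expected" vs "surprising".
rain_in_seattle = information_bits(0.9)          # very likely, so few bits
snow_in_miami = information_bits(1 / 1_000_000)  # very unlikely, so many bits
coin_flip = information_bits(0.5)                # a 50/50 outcome is exactly 1 bit

print(f"Rain in Seattle in November: {rain_in_seattle:.2f} bits")
print(f"Snow in Miami in July:       {snow_in_miami:.2f} bits")
print(f"Fair coin flip:              {coin_flip:.2f} bits")
```

With these made-up numbers, the expected forecast carries a small fraction of a bit, while the surprising one carries about twenty. A fair coin flip lands in between at exactly one bit, which is a handy way to remember what a bit is.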

The Alphabet

Using Shannon’s formula, we can find the bits for each letter of the alphabet, based on how likely that letter is to appear in English text. If all 26 letters were equally likely, each one would carry about 4.7 bits. In real English they are far from equally likely, so the bits vary from letter to letter.

The letter “e” is common and expected. It has about 3 bits.

The letter “q” is rare and unexpected. It shows up roughly once in every thousand letters, which works out to about 10 bits.

This means that the letter “q” carries about three times as much information as “e” because it is much less likely to appear. In information systems, rare signals require more bits to represent efficiently. If you play Scrabble, this probably feels obvious.
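Here is a small Python sketch of the letter calculation. The frequencies are rounded, assumed values of the kind found in standard English letter-frequency tables. Real tables differ by corpus, so treat the outputs as ballpark figures.

```python
import math


def information_bits(probability: float) -> float:
    """Information = log2(1 / probability), measured in bits."""
    return math.log2(1.0 / probability)


# Assumed, rounded letter frequencies for English text (illustrative only).
letter_probability = {
    "e": 0.127,  # roughly 1 letter in 8
    "t": 0.091,  # roughly 1 letter in 11
    "q": 0.001,  # roughly 1 letter in 1,000
}

letter_bits = {
    letter: information_bits(p) for letter, p in letter_probability.items()
}

for letter, bits in letter_bits.items():
    print(f"'{letter}': {bits:.1f} bits")

# Baseline: if all 26 letters were equally likely, every letter would
# carry the same amount of information.
uniform_bits = information_bits(1 / 26)
print(f"Any letter, if all 26 were equally likely: {uniform_bits:.1f} bits")
```

With these assumed frequencies, “e” lands near 3 bits and “q” near 10, with the equal-odds baseline of about 4.7 bits sitting between them. A different frequency table will shift the numbers a little, but the ordering stays the same: the rarer the letter, the more bits it carries.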

Testing Humans

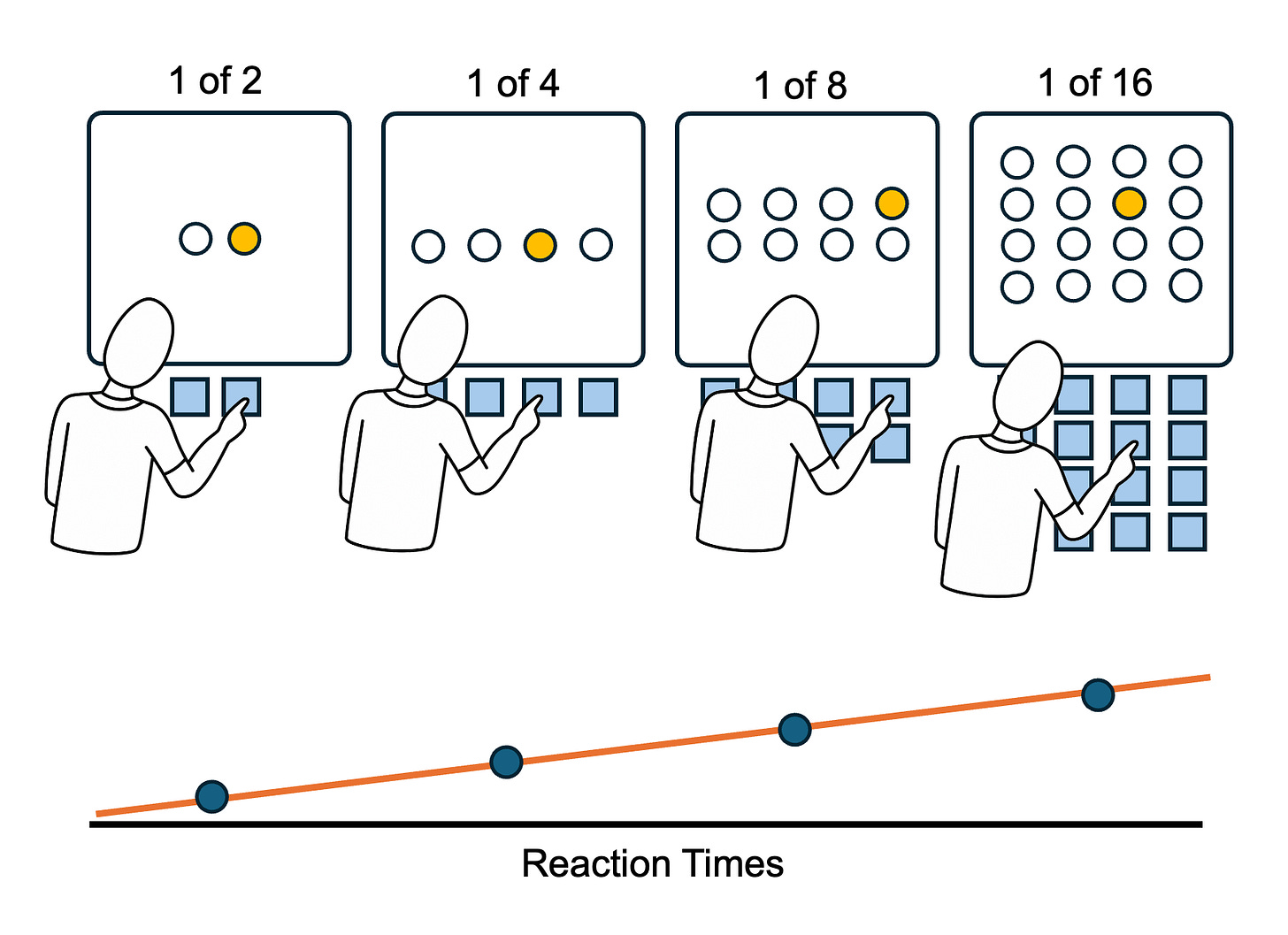

Shannon’s work also helped explain processes in the human mind. Let’s consider a real experiment conducted by William Hick, a British psychologist.

Imagine sitting in a lab in 1952 with a panel of lights in front of you, along with a set of buttons, one for each light. Your job is simple: when a light turns on, press the button for that light as quickly as possible.

First, you see a panel with two lights and two buttons. One of the lights flashes, and you quickly hit the button for that light. Easy and expected. Your reaction time is recorded in milliseconds.

Next, you see a panel with four lights and four buttons. One light flashes, and you hit the button.

The number of lights keeps doubling from round to round: after four come eight, then sixteen. Each time, you race to press the button for the light that appears while Hick precisely records your reaction time.

Hick found something fascinating. Each time the number of lights doubled, reaction time increased by about the same fixed amount. This held across people and situations. He had discovered something mathematical about the human brain. The reaction times were measurable, regular, and predictable.

Back to Shannon

Hick’s experiment highlights the power of Shannon’s work. Each light represents uncertainty. Which one will flash next? Fewer lights mean more certainty, thus less information. This maps directly to bits calculated with Shannon’s formula.

Two choices — one bit of uncertainty.

Four choices — two bits.

Eight choices — three bits.

Sixteen choices — four bits.

Each doubling adds one bit. And each additional bit adds the same fixed amount of reaction time. The mind was processing information at a measurable rate, in bits per millisecond. This rate is part of how mental processing is measured today.
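The pattern above can be sketched in a few lines of Python. This follows the simplified doubling picture used here, where uncertainty is log₂ of the number of lights. The baseline and per-bit times (BASE_MS and MS_PER_BIT) are hypothetical values I picked for illustration. They are not Hick’s measured data.

```python
import math

# Hypothetical constants, chosen only to make the pattern visible.
BASE_MS = 200.0     # assumed reaction time with no uncertainty at all
MS_PER_BIT = 150.0  # assumed extra time for each additional bit


def bits_of_uncertainty(choices: int) -> float:
    """Bits needed to single out one light among equally likely choices."""
    return math.log2(choices)


def predicted_reaction_ms(choices: int) -> float:
    """Reaction time that grows by a fixed amount for every added bit."""
    return BASE_MS + MS_PER_BIT * bits_of_uncertainty(choices)


for lights in (2, 4, 8, 16):
    bits = bits_of_uncertainty(lights)
    reaction = predicted_reaction_ms(lights)
    print(f"{lights:>2} lights: {bits:.0f} bits, about {reaction:.0f} ms")
```

Whatever values you choose for the two constants, the shape stays the same: every doubling of the lights adds one bit, and every bit adds the same slice of time. Dividing one bit by that slice of time gives the processing rate.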

Shannon had given Hick a ruler. And the ruler fit the human mind precisely.

Why This Matters

Thanks to Claude Shannon’s work, information became measurable for the first time. As with mass and energy, the ability to measure it meant that it could be tested and used in experiments. Bits per millisecond works a lot like miles per hour.

Shannon gave psychologists a formal language for thinking about the mind as an information-processing system.

Next Up

Soon, we’ll see how Shannon’s bits gave scientists a way to study the invisible mechanisms of the human mind by comparing the mind to a computer.