Sidebar: How I'm Thinking About, and Using, AI - Spring 2026

How AI has changed my work and perception of explanation as a skill

Artificial intelligence has become part of my life and a valuable tool for my work. In your shoes, I’d wonder: What comes from AI versus Lee?

I ask this about much of the media I see. In this spirit, I want to share how I’m thinking about and using AI in The Science of Explanation.

First: Are Explanation Skills Still Needed?

It’s easy to imagine AI being the world’s best explainer, capable of explaining almost anything at any level. This leads to a pivotal question: Are explainers and explanation skills really needed?

The answer is yes, and let me explain why.

First, ideas must work between people. There is a big difference between having knowledge and sharing it with clarity. The need to share ideas will remain in conversations, meetings, presentations, and more.

Second, explanation is a social activity. The best explanations depend on the human ability to sense the needs and capabilities of the audience. These signals, like body language, emotion, attitude, and context, help us adjust our message in real time.

Explanations work when they create social alignment and a shared sense of reality and context. Claude can provide answers, but it has no clue about the human signals on the other side of the screen. The feedback loop is missing.

Humans have to make communication work for other humans, and that’s the challenge. How do we overcome it? By understanding how the mind works.

Now let’s get into the nuts and bolts:

How I use AI

It seems fitting to start by asking Claude and ChatGPT how I use them.

Claude

ChatGPT

This rings true. AI, for me, is like having a smart research assistant who is always there to analyze and challenge what I’m writing. It forces me to constantly revise, reconsider, and reframe. I find this back-and-forth to be productive, even if the AI doesn’t know how I feel.

Perhaps the most valuable feature of AI is infinite patience. I can ask hundreds of dumb, probing questions, and it is happy to keep responding.

I Write the Drafts

I’ve published two books and written scripts for hundreds of explainer videos. I don’t claim to be an amazing writer, but my voice and style have become distinctive over time, and I value that deeply.

Today, I write the drafts of everything I publish. Once the draft is in place, AI helps me refine, but not replace, what I write. AI notices inconsistencies and contradictions that I sometimes miss.

What it doesn’t know is you, dear reader. That’s up to me.

Learning Humility

The Science of Explanation is a new perspective for me as a layperson. I am not only learning about scientific discoveries, but also how to write about science in a way that’s both clear and trustworthy.

Claude, in particular, has been a helpful partner. It consistently pushes me to write in terms of what will pass muster in the scientific community. It will ask:

You’re making this claim, but not backing it up with evidence. Where is the research that supports it?

I wish every person on the web had to answer this question before publishing.

AI has also helped me write from the perspective of scientific humility. This means acknowledging the limits of science and of the findings of scientific experiments.

For example, there is a big difference between saying “This is true” and “This is likely under specific circumstances.”

It’s been a challenge to find the balance between simplicity and accuracy, and AI has become a sandbox for experimenting with the nuances of scientific explanations.

Problems

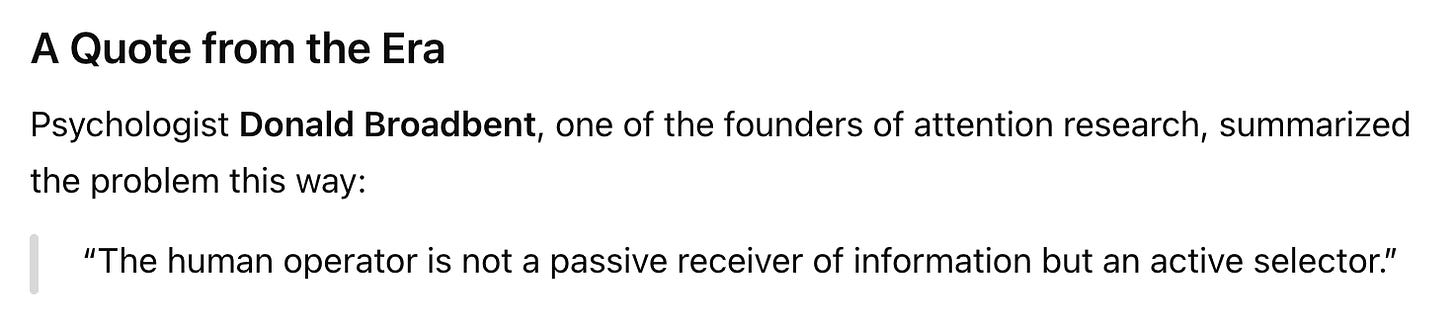

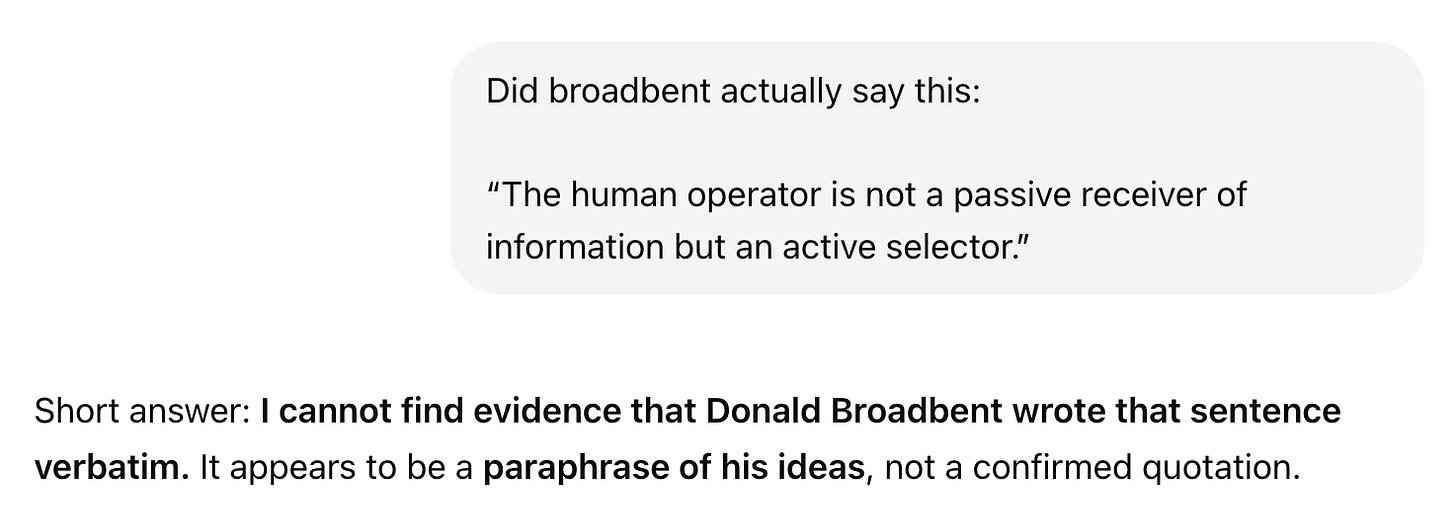

I’ve been bothered by a few consistent issues. The first is ChatGPT’s confidence in providing quotes. Here’s a real example:

WTF? ChatGPT called it a quote and put it in quotation marks. But then it can’t find it? This is… not optimal.

Saving Time with Image Production

I’ve drawn thousands of original visuals for Common Craft videos, which all share a specific style. However, they are time-consuming to produce. I have to ask: Is drawing the best use of my time?

ChatGPT has been a decent partner in quickly creating images in Common Craft style. When it works, it’s useful.

Here’s an example of my drawings in Common Craft style (not AI):

Over time, I taught ChatGPT to use Common Craft style as a template for new drawings. I outlined the specific attributes in text and then attached examples.

Here are examples created by ChatGPT:

Videos

Making animated videos is second nature to me. I’m sure AI could help, but my process gives me ultimate control, and that’s something generative AI lacks.

The videos in my posts are created by me, without AI. For example, the visual series below was designed and animated in PowerPoint. Watch a video.

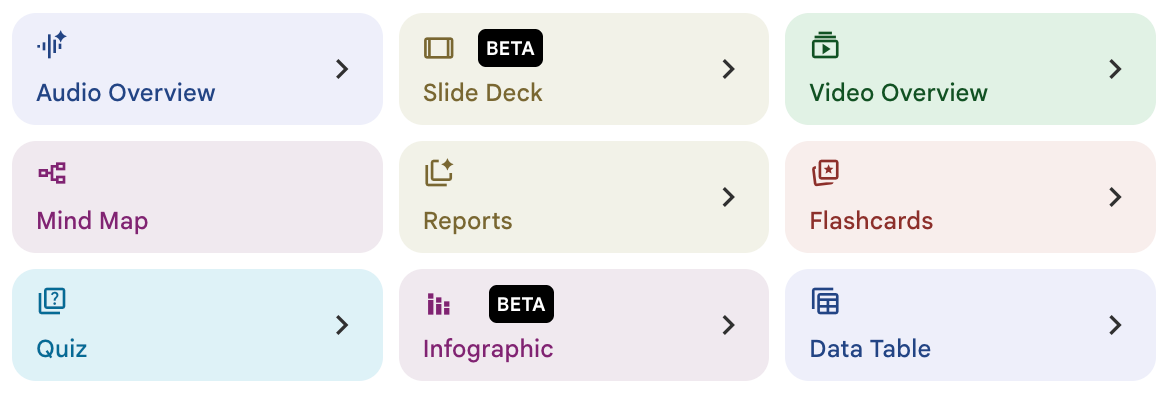

NotebookLM as an Offboard Brain and Media Creator

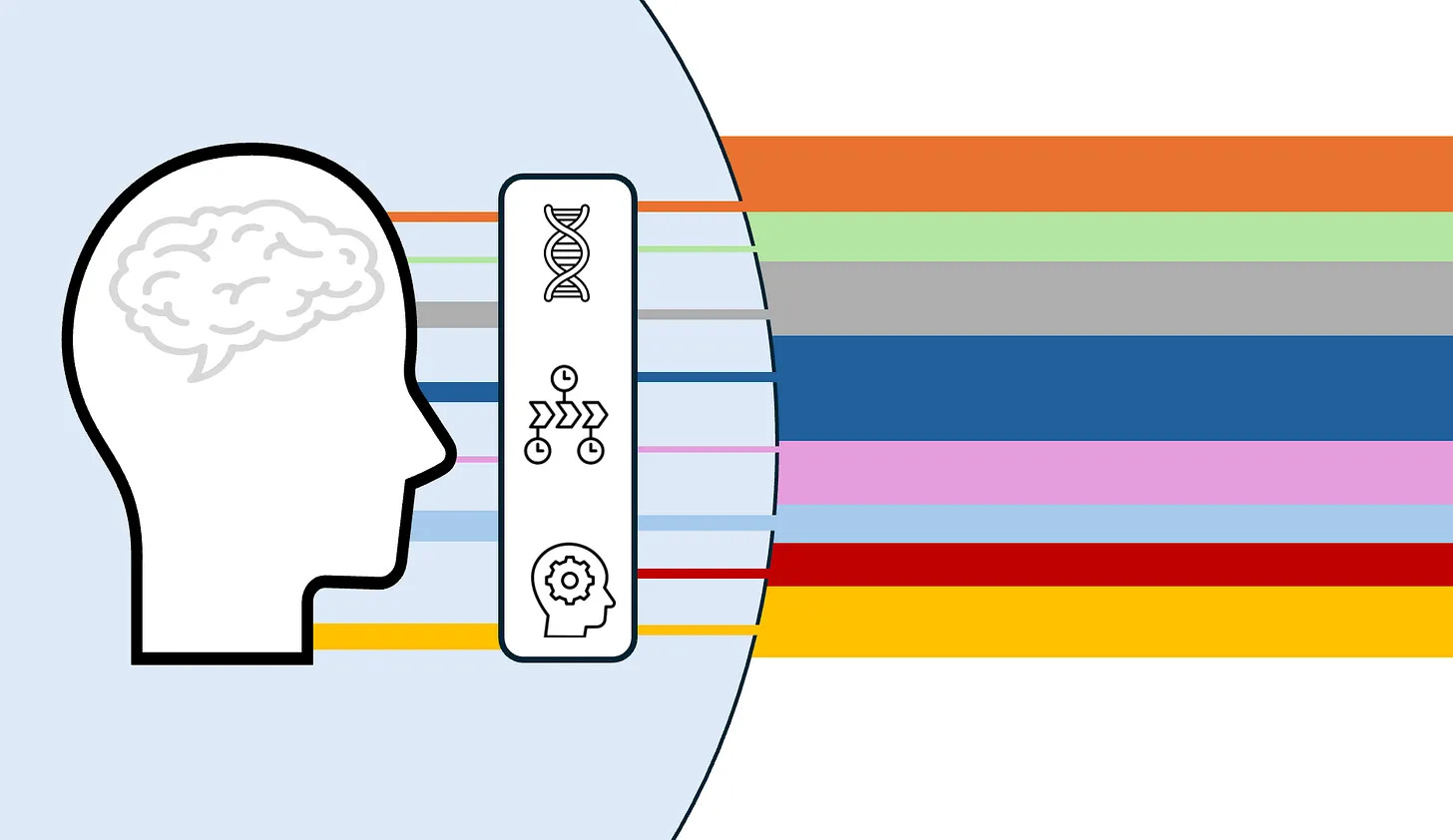

One of my favorite AI tools is NotebookLM, by Google. It’s different because it focuses only on the materials, or “Sources,” you provide to it.

For example, I could upload 50 PDFs (or YouTube videos, audio files, etc.) to a Notebook and ask NotebookLM to summarize them and provide relevant quotes. Then, the results link back to the exact passages in the materials I shared.

Today, I have a Notebook that tracks every post on The Science of Explanation. NotebookLM reads the posts and allows me to ask questions and see the overall content from a new perspective.

What blows my mind are the “studio” features, which turn Notebooks into media.

AI Made This Video

The video below was generated by NotebookLM, based on Science of Explanation posts. The tool offers a “paper craft” option, which feels like it was inspired by Common Craft style.

The script is too long, the visuals are too cluttered, and nothing moves. But for a video made in a few minutes, it’s impressive.

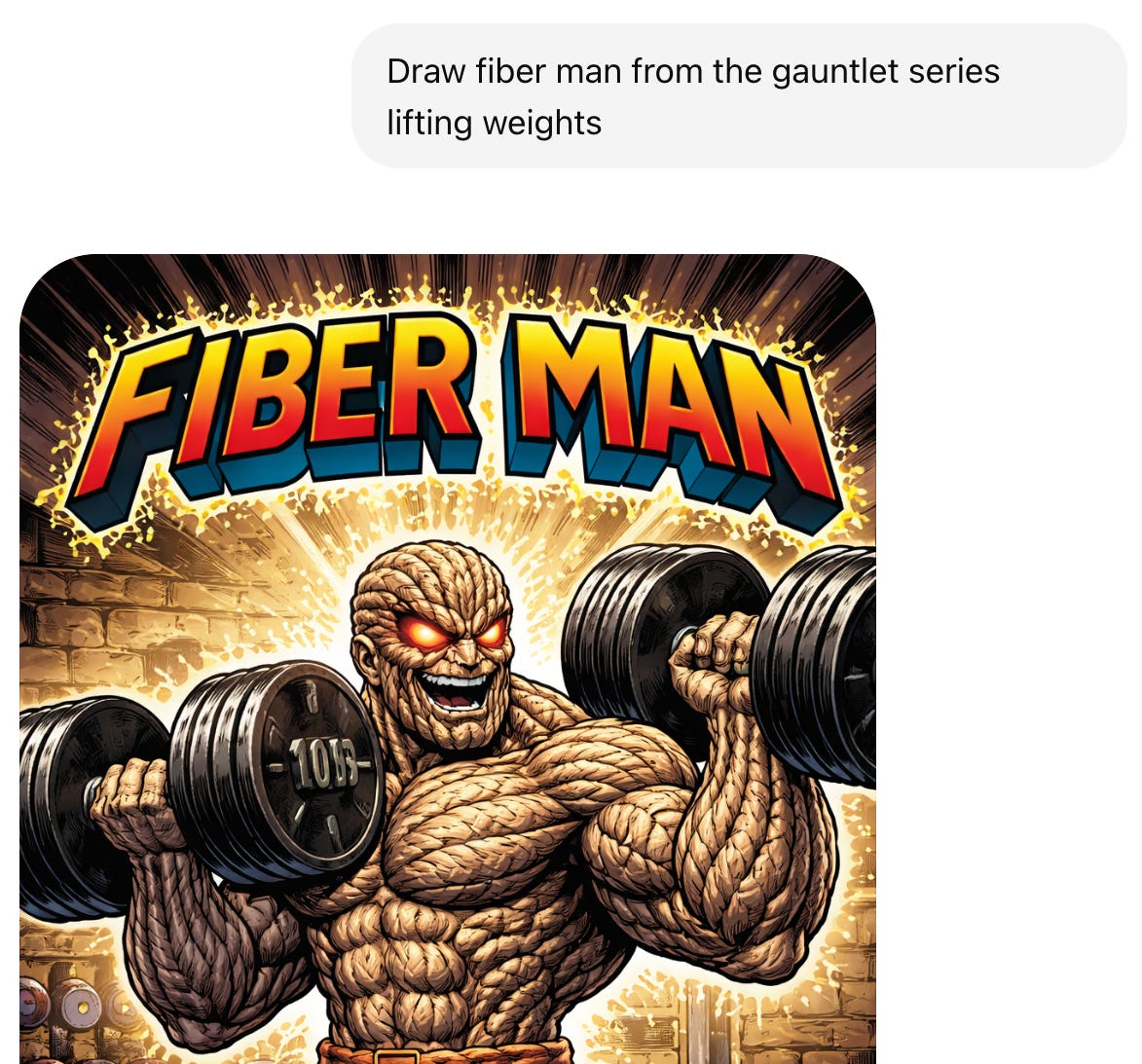

You’ll PROBABLY Get What You Want

ChatGPT’s drawings can be unpredictable. Just when I think a style is defined, it goes off the rails. While writing this, I asked ChatGPT to draw Fiber Man. Here was the output:

Nope. Not even close. I pushed further:

On the next try, it did better. I said nothing about “Cognitive Load,” but it’s an accurate inference:

Here’s how I think of generative AI: You’ll probably get what you want. The models are “probabilistic,” meaning that the results are interpretations, not determinations like the answers from a calculator.
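For the technically curious, here is a minimal Python sketch of that difference. The word list and its probabilities are invented for illustration, and the function names are my own; this is a toy, not how ChatGPT or any real model is actually built.

```python
import random

def calculator_add(a: int, b: int) -> int:
    """Deterministic: the same inputs always give the same output."""
    return a + b

# Hypothetical probabilities for the next word, made up for this example.
NEXT_WORD_WEIGHTS = {"cape": 0.6, "mask": 0.3, "bicycle": 0.1}

def sample_next_word(rng: random.Random) -> str:
    """Probabilistic: draw one word at random, weighted by its probability."""
    words = list(NEXT_WORD_WEIGHTS)
    weights = list(NEXT_WORD_WEIGHTS.values())
    return rng.choices(words, weights=weights, k=1)[0]

if __name__ == "__main__":
    # The calculator gives the same answer every time.
    print(calculator_add(2, 2))

    # The sampler usually picks the likeliest word, but not always.
    rng = random.Random()
    print([sample_next_word(rng) for _ in range(5)])
```

Run it a few times. The sum never changes, while the list of words can differ on every run. The likeliest word shows up most often, which is the "probably" in "you'll probably get what you want."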

Looking Ahead

AI is probably going to change society in unpredictable ways, both good and bad. It could cure cancer and then wipe out jobs. I’m worried about the existential threats, but I have no way to alter the course of these new technologies.

What I can do today is focus on something even more fascinating: the human mind and how it works. I use AI consistently in that work, but all the while, I’m reminded that it’s not a person with feelings and a brain. It can never feel joy, embarrassment, or human connection. When it comes to communication and explanation, humans are what truly matter.
